Astro vs Lovable for SEO: Why Static Sites Win

My pillar page monitoring script fired at 3am. The page was gone from Google. Here's what a rendering issue costs you — and why I chose Astro when I built something I actually care about ranking.

I build web projects — mostly personal, a couple for clients. For most of them I use Lovable for front-end decisions: layout, components, design. Then I connect the repo to GitHub so Claude Code can handle the back end. Fast to spin up, clean separation, and I stay in control of the logic.

Then one morning my pillar page monitoring script fired and my OpenClaw agent pushed the alert to Telegram. My pillar page had disappeared from Google.

Not penalized. Not a manual action. Just gone — as if it never existed.

What Actually Happened

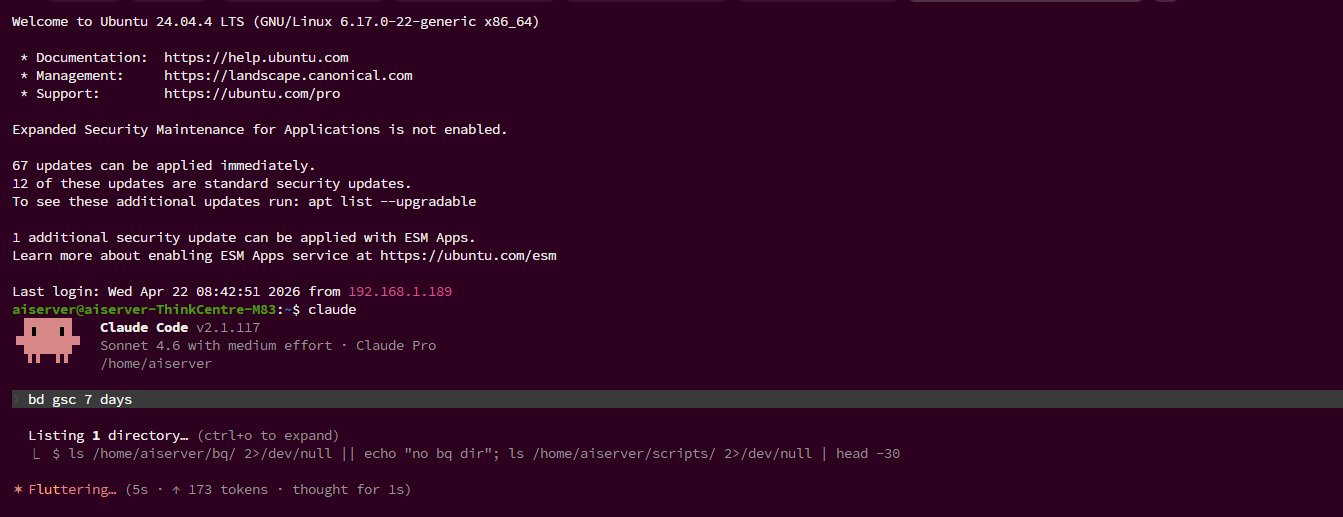

The pillar page script monitors ranking position and index status on my most important pages. When the page dropped out of the index, the script fired immediately and sent an alert to Telegram via the Bot API. OpenClaw summarized the alert. I took that summary to Claude Code running in my terminal, which has full memory of my projects and access to the GitHub repo and the GSC API. It investigated, pulled the data, and identified the rendering issue. I had a clear diagnosis without having to dig through Search Console manually.
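A monitor like this can be small. The sketch below is illustrative rather than the exact script described above: `PageStatus`, `build_alert`, and the three-position drop threshold are hypothetical names and values, and where the index status comes from (for example the GSC API) is left out. The only external piece is the Telegram Bot API `sendMessage` endpoint, which takes a `chat_id` and a `text`.

```python
import json
import urllib.request
from dataclasses import dataclass
from typing import Optional

TELEGRAM_API = "https://api.telegram.org/bot{token}/sendMessage"


@dataclass
class PageStatus:
    url: str
    indexed: bool
    position: Optional[float]  # average ranking position, None if not ranking


def build_alert(previous: PageStatus, current: PageStatus) -> Optional[str]:
    """Return an alert message if the page regressed, otherwise None."""
    if previous.indexed and not current.indexed:
        return f"ALERT: {current.url} dropped out of the index"
    if previous.position is not None and current.position is not None:
        # Hypothetical threshold: alert on a drop of three or more positions.
        if current.position - previous.position >= 3:
            return (
                f"ALERT: {current.url} fell from position "
                f"{previous.position:g} to {current.position:g}"
            )
    return None


def build_telegram_request(token: str, chat_id: str, text: str) -> urllib.request.Request:
    """Build (but do not send) a sendMessage request for the Telegram Bot API."""
    payload = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        TELEGRAM_API.format(token=token),
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_alert(token: str, chat_id: str, text: str) -> None:
    """Send the alert; raises on network or HTTP errors."""
    with urllib.request.urlopen(build_telegram_request(token, chat_id, text), timeout=10):
        pass
```

Keeping the comparison logic separate from the network call means the alert rules can be tested without a bot token.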

Rendering issue. The page was built on a CSR stack — React, client-side rendered. Googlebot had visited, got a near-empty HTML shell, and moved on before JavaScript had a chance to build the DOM. At some point Google stopped waiting and dropped the page entirely.

The fix took less than an hour. Two hours after pushing it, the page was back — not just recovered, but at position 2. It had been sitting at position 3 before the incident. Fixing the rendering issue properly actually moved it up.

That’s the thing about CSR: it doesn’t just risk a penalty. It quietly suppresses pages that should be ranking, and you won’t know until they disappear.

The fix was a custom prerender layer — a static HTML snippet injected directly into the React root div under the id ssr-prerender. Googlebot sees real content immediately. Real users get the React app as normal, which replaces it on load. It works. But it’s a patch, not a solution — something you bolt on after the fact, maintain separately, and have to remember every time you add a new page or change content.
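As a rough sketch of the idea, a post-build step could rewrite the shell like this. Several details are assumptions: the empty mount point is taken to be `<div id="root"></div>`, the snippet is wrapped in a child element with the id `ssr-prerender`, and `inject_prerender` is a hypothetical helper, not the code from the actual fix.

```python
import re

# Assumption: the built shell contains an empty mount point exactly like this.
ROOT_PATTERN = re.compile(r'<div id="root">\s*</div>')


def inject_prerender(shell_html: str, snippet_html: str) -> str:
    """Place a static HTML snippet inside the empty React root div."""
    replacement = (
        f'<div id="root"><div id="ssr-prerender">{snippet_html}</div></div>'
    )
    # A callable replacement avoids backslash-escape handling in the snippet.
    result, count = ROOT_PATTERN.subn(lambda _match: replacement, shell_html, count=1)
    if count == 0:
        raise ValueError('no empty <div id="root"> found in the shell')
    return result
```

The maintenance cost shows up right away: the snippet has to be regenerated whenever the page content changes, or crawlers and users end up seeing different text.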

When I decided to build this blog — a place to document agentic AI workflows that I actually want people to find — I wasn’t going to patch my way through it again.

The Tools I Looked At

Before landing on a stack, I mapped out the real options. Not just “what’s popular” but what the actual tradeoffs are for a content site in 2026.

Lovable

Lovable is a prompt-to-app builder. You describe what you want, it generates a React codebase, you deploy. The UX is genuinely impressive for web apps: forms, dashboards, interactive tools. For a marketing page or a content blog it’s the wrong tool. It’s CSR by default, costs $25/month, and Google may drop your pages from the index if JavaScript rendering is inconsistent.

I still use it for clients who need web apps. I won’t use it for any site where organic search matters.

Cursor

Cursor is a VS Code fork with an embedded AI assistant. It’s excellent for developers: autocomplete, inline edits, multi-file refactors. The key distinction is that you’re still driving. Cursor helps you write code faster, but you’re making the architectural decisions, reviewing diffs, committing changes. It doesn’t choose a framework for you or build a deployment pipeline.

For someone comfortable in a terminal, Cursor is a productivity multiplier. It’s not opinionated about your output format, which means the SEO tradeoffs are entirely yours to manage.

Google Antigravity

Antigravity launched in November 2025 as Google’s answer to Cursor, built on a VS Code fork with Gemini 3 baked in. It has a free tier and an agent-first design. The agent can take longer autonomous runs than Cursor: plan a feature, write the files, run the tests, iterate. It’s closer to what I was already doing with Claude Code.

I evaluated it briefly. The free tier is compelling, and Gemini 3 handles code well. What held me back was ecosystem maturity — it’s new, and I’d already built a workflow around Claude Code that I trusted.

What I Actually Use

My setup is deliberately boring:

- ThinkCentre PC, bare metal Ubuntu, no cloud VM

- Claude Code over SSH as the agent

- Astro 4 — SSG, zero client JS by default

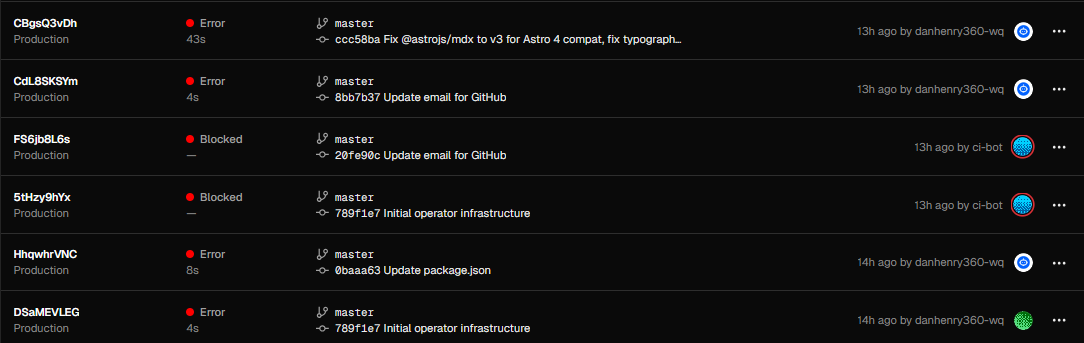

- Vercel for deploy, auto-deploys on every push to master

Claude Code handles file editing, refactoring, and multi-step builds. I review diffs, approve writes, and push. The agent runs in my terminal — no subscription to a hosted IDE, no vendor controlling my environment.

Astro was the obvious framework choice once I ruled out React-based options. Every page is static HTML at build time. No JavaScript ships to the browser unless you explicitly add it. Googlebot sees exactly what a user sees, because there’s no rendering step to skip.

The content schema is straightforward: MDX files in src/content/posts/. Writing a new post means dropping in an MDX file and pushing. No CMS, no API calls, no paid tier.
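To make the "drop a file and push" step concrete, here is a small scaffolding sketch. It only computes the path and the starting content. The frontmatter fields `title` and `pubDate` are hypothetical placeholders, since the real schema is whatever the site's content collection defines, and `slugify` and `new_post` are illustrative helpers.

```python
import re
from datetime import date
from pathlib import PurePosixPath

POSTS_DIR = PurePosixPath("src/content/posts")


def slugify(title: str) -> str:
    """Lowercase the title and collapse anything non-alphanumeric into hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


def new_post(title: str, published: date) -> tuple[PurePosixPath, str]:
    """Return the file path and initial frontmatter for a new post."""
    path = POSTS_DIR / f"{slugify(title)}.mdx"
    safe_title = title.replace('"', '\\"')
    # Hypothetical frontmatter fields; adjust to the collection's real schema.
    content = (
        "---\n"
        f'title: "{safe_title}"\n'
        f"pubDate: {published.isoformat()}\n"
        "---\n\n"
    )
    return path, content
```

Writing the returned content to the returned path, committing, and pushing is the whole publishing workflow under this setup.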

Astro vs Lovable for SEO: The Real Comparison

This is the question that matters if you’re using AI-assisted tools to build content sites.

Lovable is built for speed — you describe a UI, it ships React. That’s genuinely useful for products, dashboards, anything interactive. But React’s default is client-side rendering, which means the server returns a near-empty HTML document and the browser runs JavaScript to build the page. That process works fine for users. It’s unreliable for crawlers.

Googlebot handles JavaScript pages in two waves. First wave: fetch the raw HTML. Second wave: render JavaScript. The second wave runs from a render queue, so it happens on a delay (sometimes hours, sometimes days) and isn’t guaranteed for every page on every crawl. For a new site or a page with low crawl budget, Google may only ever see the first wave. On a CSR site, that first wave is an empty shell.

Astro doesn’t have this problem. The HTML is complete at build time, so there is no client-side rendering step for a crawler to wait on. Every page is just a file on disk, served directly. Googlebot gets the same document a user gets, every time, on the first request.

The practical difference: an Astro page can be indexed the same day it’s published. A Lovable page might be indexed the same day, or it might take two weeks and require a manual indexing request through URL Inspection in Search Console to trigger the second render wave.

For a blog where every post is a potential organic traffic source, that’s not a minor difference.
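One way to see what the first wave sees is to count the words in the raw HTML without running any JavaScript. The sketch below uses only the standard library. `VisibleText` and `visible_words` are hypothetical names, and skipping `<title>` is a choice made here so that only body copy is counted.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text a non-rendering crawler could read from raw HTML."""

    # Content inside these tags is not counted as body copy.
    SKIPPED = {"script", "style", "template", "title"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_words(raw_html: str) -> int:
    """Count words present in the HTML before any JavaScript runs."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    return sum(len(chunk.split()) for chunk in parser.chunks)
```

Fed the raw response of a CSR page whose body is an empty root div plus a script tag, this returns zero. Fed a statically built page, it returns roughly the word count of the article.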

The SEO Tradeoff That Actually Matters

Here’s the thing nobody says clearly: your platform choice is an SEO decision.

Every framework has a default rendering strategy. If that default is client-side, you’re opting into a crawl risk. You can mitigate it, but you can’t fully eliminate it — and every mitigation adds operational overhead.

Static site generators like Astro, Eleventy, and Hugo don’t have this problem. The HTML exists at build time, before any crawler or user arrives. There’s nothing to render, nothing to wait for, nothing to patch around.

Beyond crawlability, static sites have compounding SEO advantages:

Speed. Astro pages load fast because there’s no JavaScript bundle to parse before content appears. Core Web Vitals, LCP in particular, are significantly easier to hit when you’re serving pre-built HTML. Google’s ranking systems take Core Web Vitals into account as part of page experience. A slow React app is fighting an uphill battle that an Astro site doesn’t have to fight.

Structured data. With Astro you define your JSON-LD schema once in your base layout and it’s present on every page, every build, without relying on a script that might fail to execute. With CSR you’re dependent on JavaScript running correctly for your structured data to be visible to crawlers.
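The shape of that markup is simple enough to sketch. In an Astro site it would live in the layout component. The Python below is a language-neutral illustration of the same output, using the schema.org `BlogPosting` type. `blog_posting_jsonld` is a hypothetical helper, and the choice of fields is an assumption about what a blog post needs.

```python
import json


def blog_posting_jsonld(headline: str, url: str, date_published: str, author_name: str) -> str:
    """Render a schema.org BlogPosting as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "url": url,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name},
    }
    # Escape "<" so a value such as "</script>" cannot close the tag early.
    body = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{body}</script>'
```

Because the tag is written into the HTML at build time, a crawler that never executes JavaScript still sees it.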

Canonical URLs. Static generation makes canonical URLs predictable and consistent. There’s no dynamic routing that might generate duplicate URLs under different parameters, no JavaScript redirects that crawlers might not follow.
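A canonical URL rule can be stated in a few lines. This sketch assumes one particular policy (HTTPS, a single host, no query string or fragment, trailing slash on page paths), and `canonical_url` is a hypothetical helper. Your site's policy may differ, but the point is that a static build can apply one rule to every page.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str, site_host: str) -> str:
    """Normalize a URL to the single form that should appear in rel=canonical."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Add a trailing slash to page paths, but leave files like /feed.xml alone.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    # Drop the query string and fragment; force https and the canonical host.
    return urlunsplit(("https", site_host, path, "", ""))
```

For example, a tracking-parameter variant such as `http://www.example.com/posts/astro-seo?utm_source=x#top` collapses to the same canonical form as the clean URL.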

For a content blog that needs to rank, none of this is optional. It’s the baseline.

What I’d Tell Someone Starting Now

If you’re building a web app — a tool, a dashboard, something with real interactivity — Lovable is a legitimate choice. Ship fast, iterate, worry about SEO when the product is solid.

If you’re building a content site — a blog, a documentation site, a resource hub where organic traffic is the point — don’t use a CSR framework as your foundation. The crawl risk is real, the fixes are patches, and you’ll spend time maintaining workarounds that a static site simply doesn’t need.

Astro is free, deploys to Vercel in one click, and handles the rendering fundamentals of SEO out of the box. The learning curve is light if you know HTML and basic JavaScript. The tradeoff is that it’s less impressive to demo than a prompt-to-app builder, but it’s built for content that actually gets found.

Read part 2 to see how I built this blog in a single Claude Code session.